Windows Agent Arena (WAA) 🪟 is a scalable Windows AI agent platform for testing and benchmarking multi-modal, desktop AI agents. WAA provides researchers and developers with a reproducible and realistic Windows OS environment for AI research, where agentic AI workflows can be tested across a diverse range of tasks.

WAA supports the deployment of agents at scale using the Azure ML cloud infrastructure, allowing for the parallel running of multiple agents and delivering quick benchmark results for hundreds of tasks in minutes, not days.


- 2024-11-10: We added a new difficulty mode for Windows Agent Arena! You can try the harder mode by changing the default `diff_lvl="normal"` to `diff_lvl="hard"` in `src/win-arena-container/start_client.sh`. Under the harder difficulty, in many tasks, agents must also learn to initialize/set up the task themselves (e.g., finding and opening the right program/application for the task) rather than have the task "set up" for them by the task config.

- 2024-10-30: We released the code for our Navi agent with Omniparser! For the top performing mode in the paper, run `./run-local.sh --som-origin mixed-omni --gpu-enabled true`.

- 2024-10-23: Microsoft open-sourced Omniparser, the current top performing screen understanding model in our benchmark.

- 2024-09-13: We released our paper, code, project page, and blog post. Check it out!

Our technical report paper can be found here. If you find this environment useful, please consider citing our work:

@article{bonatti2024windows,

author = {Bonatti, Rogerio and Zhao, Dan and Bonacci, Francesco and Dupont, Dillon and Abdali, Sara and Li, Yinheng and Wagle, Justin and Koishida, Kazuhito and Bucker, Arthur and Jang, Lawrence and Hui, Zack},

title = {Windows Agent Arena: Evaluating Multi-Modal OS Agents at Scale},

institution = {Microsoft},

year = {2024},

month = {September},

}

- Docker daemon installed and running. On Windows, we recommend using Docker with WSL 2.

- An OpenAI or Azure OpenAI API Key.

- Python 3.9. We recommend using Conda and creating a dedicated Python environment for running the scripts. To create a new environment, run `conda create -n winarena python=3.9`.

Clone the repository and install dependencies:

git clone https://github.com/microsoft/WindowsAgentArena.git

cd WindowsAgentArena

# Install the required dependencies in your python environment

# conda activate winarena

pip install -r requirements.txt

Create a new `config.json` at the root of the project with the necessary keys (from OpenAI or Azure endpoints):

{

"OPENAI_API_KEY": "<OPENAI_API_KEY>", // if you are using OpenAI endpoint

"AZURE_API_KEY": "<AZURE_API_KEY>", // if you are using Azure endpoint

"AZURE_ENDPOINT": "https://yourendpoint.openai.azure.com/", // if you are using Azure endpoint

}

To get started, pull the base image from Docker Hub:

docker pull windowsarena/winarena-base:latest

This image includes all the necessary dependencies (such as packages and models) required to run the code in the `src` directory.
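Before moving on to the build, it can help to confirm that `config.json` carries a usable set of keys. The following is a minimal sketch and not part of the WAA codebase: the helper name `configured_endpoints` is hypothetical, and it assumes that `config.json` is plain JSON (without the `//` comments shown in the example), that a value still wrapped in `<...>` is an unfilled placeholder, and that the Azure endpoint needs both of its keys.

```python
import json
from pathlib import Path
from typing import List

# Key names are taken from the config.json example; the grouping is an assumption.
REQUIRED_KEYS = {
    "openai": ("OPENAI_API_KEY",),
    "azure": ("AZURE_API_KEY", "AZURE_ENDPOINT"),
}


def _is_set(value: object) -> bool:
    """Treat empty strings and '<PLACEHOLDER>' values as not configured."""
    text = str(value or "").strip()
    return bool(text) and not text.startswith("<")


def configured_endpoints(config: dict) -> List[str]:
    """Return the endpoint names whose keys are all filled in (hypothetical helper)."""
    return [
        name
        for name, keys in REQUIRED_KEYS.items()
        if all(_is_set(config.get(key)) for key in keys)
    ]


def check_config_file(path: str = "config.json") -> List[str]:
    """Load a plain-JSON config file and report which endpoints are usable."""
    return configured_endpoints(json.loads(Path(path).read_text(encoding="utf-8")))
```

An empty list from `check_config_file()` would suggest that neither endpoint is fully configured yet.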

Next, build the WinArena image locally:

cd scripts

./build-container-image.sh

# If there are any changes in 'Dockerfile-WinArena-Base', use the --build-base-image flag to also build the base image locally

# ./build-container-image.sh --build-base-image true

# For other build options:

# ./build-container-image.sh --help

This will create the `windowsarena/winarena:latest` image with the latest code from the `src` directory.


- Visit Microsoft Evaluation Center, accept the Terms of Service, and download a Windows 11 Enterprise Evaluation (90-day trial, English, United States) ISO file [~6GB]

- After downloading, rename the file to `setup.iso` and copy it to the directory `WindowsAgentArena/src/win-arena-container/vm/image`
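The rename-and-copy step above can also be scripted. This is a minimal sketch under stated assumptions: `place_iso` is a hypothetical helper (not part of the repository), and the target directory and file name are taken from the step above.

```python
import shutil
from pathlib import Path

# Target directory (relative to the repository root) and file name come from the step above.
IMAGE_DIR = Path("src/win-arena-container/vm/image")


def place_iso(downloaded_iso: str, repo_root: str) -> Path:
    """Copy the downloaded ISO into the repository as setup.iso (hypothetical helper)."""
    source = Path(downloaded_iso)
    if not source.is_file():
        raise FileNotFoundError(f"ISO not found: {source}")
    target_dir = Path(repo_root) / IMAGE_DIR
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / "setup.iso"
    shutil.copy2(source, target)  # copy rather than move, so the original download is kept
    return target
```

For example, `place_iso("Downloads/Win11_Eval.iso", "WindowsAgentArena")` would leave the file at `WindowsAgentArena/src/win-arena-container/vm/image/setup.iso`; the download file name here is only a placeholder.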

Before running the arena, you need to prepare a new WAA snapshot (also referred to as the WAA golden image). This 30GB snapshot represents a fully functional Windows 11 VM with all the programs needed to run the benchmark. This VM additionally hosts a Python server which receives and executes agent commands. To learn more about the components at play, see our local and cloud components diagrams.

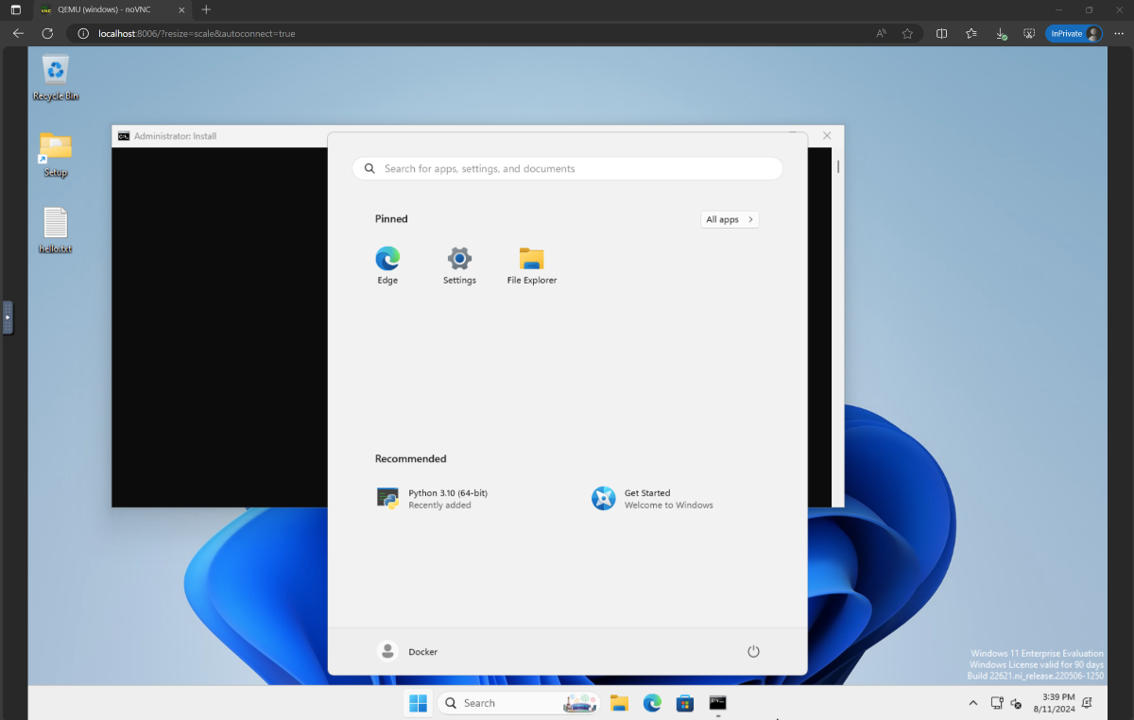

To prepare the golden snapshot, run the following once:

cd ./scripts

./run-local.sh --prepare-image true

You can monitor progress at http://localhost:8006. The preparation process is fully automated and will take ~20 minutes.

Please do not interfere with the VM while it is being prepared. It will automatically shut down when the provisioning process is complete.

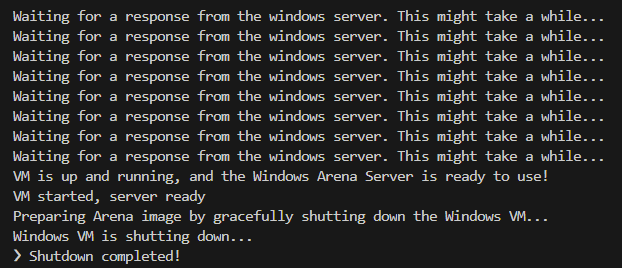

At the end, you should expect the Docker container named winarena to terminate gracefully, as shown in the logs below.

You will find the 30GB WAA golden image in WindowsAgentArena/src/win-arena-container/vm/storage, consisting of the following files:

- During development, if you want to include any changes made in the `src/win-arena-container` directory in the WAA golden image, make sure to pass the flag `--skip-build false` to the `run-local.sh` script (it defaults to true). This ensures that a new container image is built instead of using the prebuilt `windowsarena/winarena:latest` image.

- If you have previously run an installation process and want to do it again from scratch, make sure to delete the content of `storage`.

- We recommend copying this `storage` folder to a safe location outside of the repository in case you or the agent accidentally corrupt the VM at some point and you want to avoid a fresh setup.

- Depending on your docker settings, you might have to run the above command with `sudo`.

- Running on WSL2? If you encounter the error `/bin/bash: bad interpreter: No such file or directory`, we recommend converting the bash scripts from DOS/Windows format to Unix format:

cd ./scripts

find . -maxdepth 1 -type f -exec dos2unix {} +

You're now ready to launch the evaluation. To run the baseline agent on all benchmark tasks, do:

cd scripts

./run-local.sh

# For client/agent options:

# ./run-local.sh --help

Open http://localhost:8006 to see the Windows VM with the agent running. If you have a beefy PC, you can instead run the strongest agent configuration in our paper by doing:

./run-local.sh --gpu-enabled true --som-origin mixed-omni --a11y-backend uia

At the end of the run you can display the results using the command:

cd src/win-arena-container/client

python show_results.py --result_dir <path_to_results_folder>

Below is a comparison of various combinations of hyperparameters used by the Navi agent in our study, which can be overridden by specifying `--som-origin <som_origin> --a11y-backend <a11y_backend>` when running the `run-local.sh` script:

| Command | Description | Notes |
|---|---|---|
| `./run-local.sh --som-origin mixed-omni --a11y-backend uia` | Combines Omniparser with accessibility tree information | ⭐Recommended for best results |
| `./run-local.sh --som-origin omni` | Uses Omniparser for screen understanding | |
| `./run-local.sh --som-origin oss` | Uses webparse, groundingdino, and OCR (TesseractOCR) | 🌲Baseline |
| `./run-local.sh --som-origin a11y --a11y-backend uia` | Uses slower, more accurate accessibility tree | |
| `./run-local.sh --som-origin a11y --a11y-backend win32` | Uses faster, less accurate accessibility tree | 🐇Fastest |
| `./run-local.sh --som-origin mixed-oss --a11y-backend uia` | Combines oss detections with accessibility tree | |

- `--som-origin` determines how the Navi agent detects screen elements

- `--a11y-backend` specifies the accessibility backend type (when using `a11y` or mixed modes)
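When sweeping over these configurations from a script, it may be convenient to assemble the command line programmatically. The sketch below is illustrative only: `build_run_command` is a hypothetical helper, and the accepted values are limited to those that appear in this README (the script itself may accept others).

```python
from typing import List, Optional

# Values listed in this README; run-local.sh may accept more.
SOM_ORIGINS = {"oss", "a11y", "mixed-oss", "omni", "mixed-omni"}
A11Y_BACKENDS = {"uia", "win32"}


def build_run_command(som_origin: str, a11y_backend: Optional[str] = None) -> List[str]:
    """Build an argument list for run-local.sh (hypothetical helper)."""
    if som_origin not in SOM_ORIGINS:
        raise ValueError(f"unknown --som-origin value: {som_origin!r}")
    command = ["./run-local.sh", "--som-origin", som_origin]
    if a11y_backend is not None:
        if a11y_backend not in A11Y_BACKENDS:
            raise ValueError(f"unknown --a11y-backend value: {a11y_backend!r}")
        command += ["--a11y-backend", a11y_backend]
    return command
```

The resulting list can be passed to `subprocess.run(command, cwd="scripts", check=True)`, which avoids shell quoting issues.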

At first sight it might seem challenging to develop/debug code running inside the docker container. However, we provide a few tips to make this process easier. Check the Development-Tips Doc for more details such as:

- How to attach a VSCode window (with debugger) to the running container

- How to change the agent and Windows server code from your local machine and see the changes reflected in real time in the container

We offer a seamless way to run the Windows Agent Arena on Azure ML Compute VMs. This option will significantly reduce the time needed to test your agent in all benchmark tasks from hours/days to minutes.

- If you don't already have an Azure subscription, you can start a free trial. Take note of the subscription ID; we will use it as `AZURE_SUBSCRIPTION_ID` in Section 3.

- In the Azure portal, create a new resource group (e.g. `agents`) in the region of your choice. Take note of the resource group name; we will use it as `AZURE_ML_RESOURCE_GROUP` in Section 3.

- Within this resource group, create an Azure Machine Learning resource (e.g. name it `agents_ml`). Take note of the ML workspace name; we will use it as `AZURE_ML_WORKSPACE_NAME` in Section 3. During the creation wizard, make sure to check the boxes for automatically creating new:
  - Storage Account. Note: Take note of the Storage Account name; we will use it to upload the golden image in Section 2.
  - Key vault.
  - Application Insights.
  - [optional] Container Registry. You can use the Azure Container Registry to privately store your custom docker images without the need to push them to the public Docker Hub.

- Once creation is complete, navigate to the Azure Machine Learning portal and click on your workspace (`agents`)

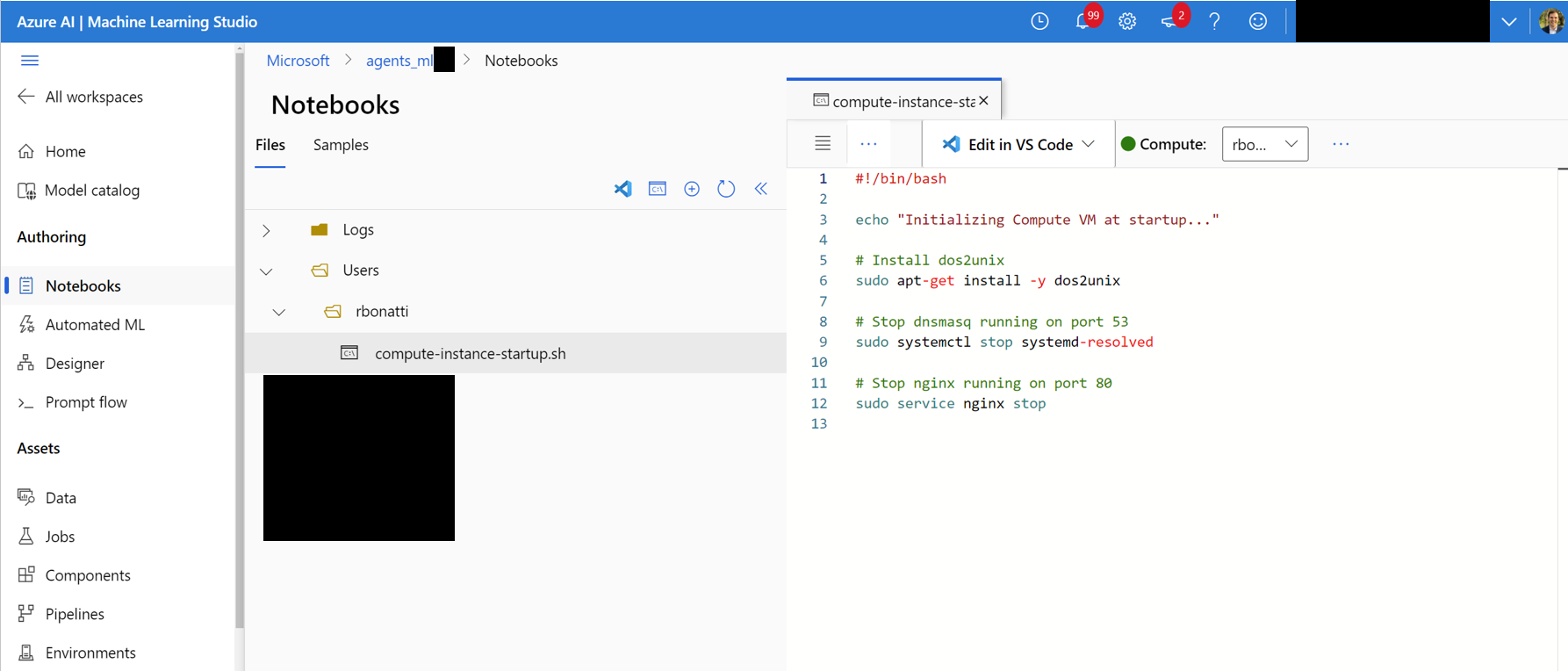

- In the workspace, navigate to the `Notebooks` tab. In your user-assigned folder (as shown in the figure below), create a new bash (.sh) file named `compute-instance-startup.sh`. Copy the content of `scripts/azure_files/compute-instance-startup.sh` into this file and save it. This script will be used every time a new VM is launched in Azure to apply some base configurations. Take note of the path where you save the file (in the form of `Users/<YOUR_USER>/compute-instance-startup.sh`); we will use it to run the script in Section 3.

- [Optional] You might want to ask for more compute quota for your region depending on your needs. You can do so by navigating to the Azure Quota page. As a reference, we currently use the `Standard_D8_v3` VM size for our benchmarking, which falls under the `Standard Dv3 Family Cluster Dedicated vCPUs` category. Each VM uses 8 cores. Make sure the machine type you use supports nested virtualization.

- Upload the Windows 11 storage folder to the Blob container associated with your default datastore. By default, the Azure ML Workspace's underlying data is backed by a Storage Account through one or more ML datastores. The default datastore, named `workspaceblobstore`, is created during the workspace setup and linked to a Blob container under the Azure Storage Account. You can review the association between the datastores and containers by visiting Azure ML Datastore. Once found, you can then upload the storage folder in different ways:
  - Download the Azure Storage Explorer program, log in, and select the blob container. Upload the `WindowsAgentArena/src/win-arena-container/vm/storage` folder from your local machine after running the local setup steps.
  - Alternatively, you can use the Azure CLI to upload the folder. To install the CLI, follow the steps provided here. Once installed, you can use the following command:

az login --use-device-code # Only needed if prompted

az storage blob upload-batch --account-name <STORAGE_ACCOUNT_NAME> --destination <CONTAINER_NAME> --source <LOCAL_FOLDER>

# For a list of parameters check: https://docs.microsoft.com/en-us/cli/azure/storage/blob?view=azure-cli-latest

  - Alternatively, use the Azure portal interface to upload the folder. Navigate to the storage account, click on `Storage browser` -> `Blob containers`, select your container, and upload the folder. This option is not recommended for large files as connections might get unstable.

- [Optional] If you are not using the default `windowsarena/winarena:latest` image, you can upload your custom image to the Azure Container Registry. You can do so by following the Azure Container Registry documentation:

az login --use-device-code

# potentially needed if commands below don't work: az acr login --name <ACR_NAME>

docker login # you will be prompted to enter your ACR credentials (username + password which can be found in the Azure portal)

docker tag <IMAGE_NAME> <ACR_NAME>.azurecr.io/<IMAGE_NAME>:<TAG>

docker push <ACR_NAME>.azurecr.io/<IMAGE_NAME>:<TAG>

- Add the additional keys to the `config.json` file at the root of the project:

{

... // Your previous configs

"AZURE_SUBSCRIPTION_ID": "<YOUR_AZURE_SUBSCRIPTION_ID>",

"AZURE_ML_RESOURCE_GROUP": "<YOUR_AZURE_ML_RESOURCE_GROUP>",

"AZURE_ML_WORKSPACE_NAME": "<YOUR_AZURE_ML_WORKSPACE_NAME>"

}

- Create a new file named `experiments.json` to specify any parameters needed for each experiment run, including the agent to deploy and the underlying LLM model to use. You can find a reference `experiments.json` consisting of multiple experiments to run at `scripts/experiments.json`:

{

"experiment_1": {

"ci_startup_script_path": "Users/<YOUR_USER>/compute-instance-startup.sh", // As seen in Section 1

"agent": "navi",

"datastore_input_path": "storage",

"docker_img_name": "windowsarena/winarena:latest",

"exp_name": "experiment_1",

"num_workers": 4,

"use_managed_identity": false,

"json_name": "evaluation_examples_windows/test_all.json",

"model_name": "gpt-4-1106-vision-preview",

"som_origin": "oss", // or a11y, or mixed-oss

"a11y_backend": "win32" // or uia

}

// ...

}

- (Optional) You can also generate the `experiments.json` by using both the `--experiments_json` and `--update_json` parameters of `run_azure.py`. The above JSON is equivalent to the following command:

cd scripts

python run_azure.py --experiments_json "experiments.json" --update_json --exp_name "experiment_1" --ci_startup_script_path "Users/<YOUR_USER>/compute-instance-startup.sh" --agent "navi" --json_name "evaluation_examples_windows/test_all.json" --num_workers 4 --som_origin oss --a11y_backend win32

- Deploy the agent on Azure ML Compute by running:

az login --use-device-code # https://learn.microsoft.com/en-us/cli/azure/install-azure-cli

# If multiple tenants or subscriptions, make sure to select the right ones with:

# az login --use-device-code --tenant "<YOUR_AZURE_AD_TENANT_ID>"

# az account set --subscription "<YOUR_AZURE_SUBSCRIPTION_ID>"

# Make sure you have installed the python requirements in your conda environment

# conda activate winarena

# pip install -r requirements.txt

# From your activated conda environment:

cd scripts

python run_azure.py --experiments_json "experiments.json"

For any unfinished experiments in `experiments.json`, the script will:

- Create `<num_workers>` Azure Compute Instance VMs.

- Run one ML Training Job named `<exp_name>` per VM.

- Dispose of the VMs once the jobs are completed.
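Since each experiment launches `num_workers` VMs, it can be useful to check the total before deploying, for instance against your compute quota. The sketch below assembles experiment entries using the field names and defaults from the reference JSON shown earlier and sums the VMs they would request. Both helper names are hypothetical, and the sketch does not model how `run_azure.py` decides that an experiment is unfinished.

```python
import json
from typing import Dict


def make_experiment(exp_name: str, startup_script_path: str, num_workers: int = 4,
                    som_origin: str = "oss", a11y_backend: str = "win32") -> dict:
    """Build one experiment entry mirroring the reference experiments.json (hypothetical helper)."""
    return {
        "ci_startup_script_path": startup_script_path,
        "agent": "navi",
        "datastore_input_path": "storage",
        "docker_img_name": "windowsarena/winarena:latest",
        "exp_name": exp_name,
        "num_workers": num_workers,
        "use_managed_identity": False,
        "json_name": "evaluation_examples_windows/test_all.json",
        "model_name": "gpt-4-1106-vision-preview",
        "som_origin": som_origin,
        "a11y_backend": a11y_backend,
    }


def total_vms(experiments: Dict[str, dict]) -> int:
    """Sum num_workers across experiments, i.e. the VMs requested if all of them run."""
    return sum(entry["num_workers"] for entry in experiments.values())


if __name__ == "__main__":
    script = "Users/<YOUR_USER>/compute-instance-startup.sh"
    experiments = {
        "experiment_1": make_experiment("experiment_1", script),
        "experiment_2": make_experiment("experiment_2", script, num_workers=8,
                                        som_origin="a11y", a11y_backend="uia"),
    }
    print(json.dumps(experiments, indent=2))
    print("VMs requested:", total_vms(experiments))
```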

The logs from the run will be saved in an `agent_outputs` folder in the same blob container where you uploaded the Windows 11 image. You can download the `agent_outputs` folder to your local machine and run the `show_azure.py` script to see the results from every experiment as a markdown table.

cd scripts

python show_azure.py --json_config "experiments.json" --result_dir <path_to_downloaded_agent_outputs_folder>

Want to test your own agents in Windows Agent Arena? You can use our default agent as a template and create your own folder under `src/win-arena-container/client/mm_agents`. You just need to make sure that your `agent.py` file features `predict()` and `reset()` functions. For more information on agent development check out the BYOA Doc.
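To make the `predict()` / `reset()` requirement concrete, here is a minimal skeleton. It is a sketch only: the class name, the argument names, and the return shape are assumptions made for illustration, so check the BYOA Doc and the default agent for the actual interface.

```python
from typing import Any, Dict, List, Tuple


class EchoAgent:
    """Hypothetical placeholder agent that exposes predict() and reset()."""

    def __init__(self) -> None:
        self.history: List[str] = []

    def reset(self) -> None:
        """Clear per-task state so the next task starts fresh."""
        self.history.clear()

    def predict(self, instruction: str, obs: Dict[str, Any]) -> Tuple[str, List[str]]:
        """Return a text response and a list of actions for one step.

        The (instruction, obs) arguments and the (response, actions) return
        value are assumed here; a real agent would inspect the observation
        and emit actions in the format the benchmark expects.
        """
        self.history.append(instruction)
        response = f"step {len(self.history)}: {instruction}"
        actions: List[str] = []  # placeholder: this agent proposes no actions
        return response, actions
```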

We welcome contributions to the Windows Agent Arena project. In particular, we welcome:

- New open-sourced agents to be added to the benchmark

- New tasks to be added to our existing categories, or new categories altogether

If you are interested in contributing, please check out our Task Development Guidelines.

| Component | Cost | Time |
|---|---|---|
| Azure Standard_D8_v3 VM | ~$8 ($0.38/h * 40 * 0.5h) | |
| GPT-4V | $100 | ~35min with 40 VMs |
| GPT-4o | $100 | ~35min with 40 VMs |
| GPT-4o-mini | $15 | ~30min with 40 VMs |
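The VM figure in the table follows from simple arithmetic: hourly rate times number of VMs times hours per VM. The sketch below reproduces that calculation so it can be adapted to other VM counts or run times; the function name is hypothetical, and the $0.38/h rate is the one quoted in the table, which may differ by region and over time.

```python
def vm_cost_usd(hourly_rate: float, num_vms: int, hours_per_vm: float) -> float:
    """Estimated compute cost: rate ($/h) x number of VMs x hours each VM runs."""
    return hourly_rate * num_vms * hours_per_vm


if __name__ == "__main__":
    # Figures from the table: $0.38/h, 40 VMs, roughly half an hour each.
    cost = vm_cost_usd(0.38, 40, 0.5)
    print(f"Estimated VM cost: ${cost:.2f}")  # prints "Estimated VM cost: $7.60"
```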

By default, the run-local.sh script attempts to create a QEMU VM with 8 GB of RAM and 8 CPU cores. If your system has limited resources, you can override these defaults by specifying the desired RAM and CPU allocation:

./run-local.sh --ram-size 4G --cpu-cores 4

If your system does not support KVM acceleration, you can disable it by specifying the `--use-kvm false` flag:

./run-local.sh --use-kvm false

Note that running the benchmark locally without KVM acceleration is not recommended due to performance issues. In this case, we recommend preparing the golden image for later running the benchmark on Azure.
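The resource overrides and the KVM fallback above can be combined in a small launcher. This is a sketch under stated assumptions: `local_run_args` is a hypothetical helper, and it treats the presence of `/dev/kvm` on a Linux host as a proxy for KVM availability, which is not necessarily the check `run-local.sh` performs.

```python
import os
from typing import List, Optional


def local_run_args(ram_size: Optional[str] = None, cpu_cores: Optional[int] = None,
                   kvm_device: str = "/dev/kvm") -> List[str]:
    """Build run-local.sh arguments, adding --use-kvm false when no KVM device is found."""
    command = ["./run-local.sh"]
    if ram_size is not None:
        command += ["--ram-size", ram_size]
    if cpu_cores is not None:
        command += ["--cpu-cores", str(cpu_cores)]
    # Assumption: a missing device node means KVM acceleration is unavailable.
    if not os.path.exists(kvm_device):
        command += ["--use-kvm", "false"]
    return command
```

For example, `local_run_args("4G", 4)` yields the reduced-resource command shown above, with `--use-kvm false` appended only on hosts without `/dev/kvm`.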

- OS World for the original benchmark task framework.

- Dockur for the Docker infrastructure underlying WAA.

- GroundingDINO for the object detection module in our Navi Agent.

- NotebookLM for our AI-generated podcast.

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.opensource.microsoft.com.

When you submit a pull request, a CLA bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., status check, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow Microsoft's Trademark & Brand Guidelines. Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos are subject to those third-party's policies.