Project Website | Paper | RSS 2024

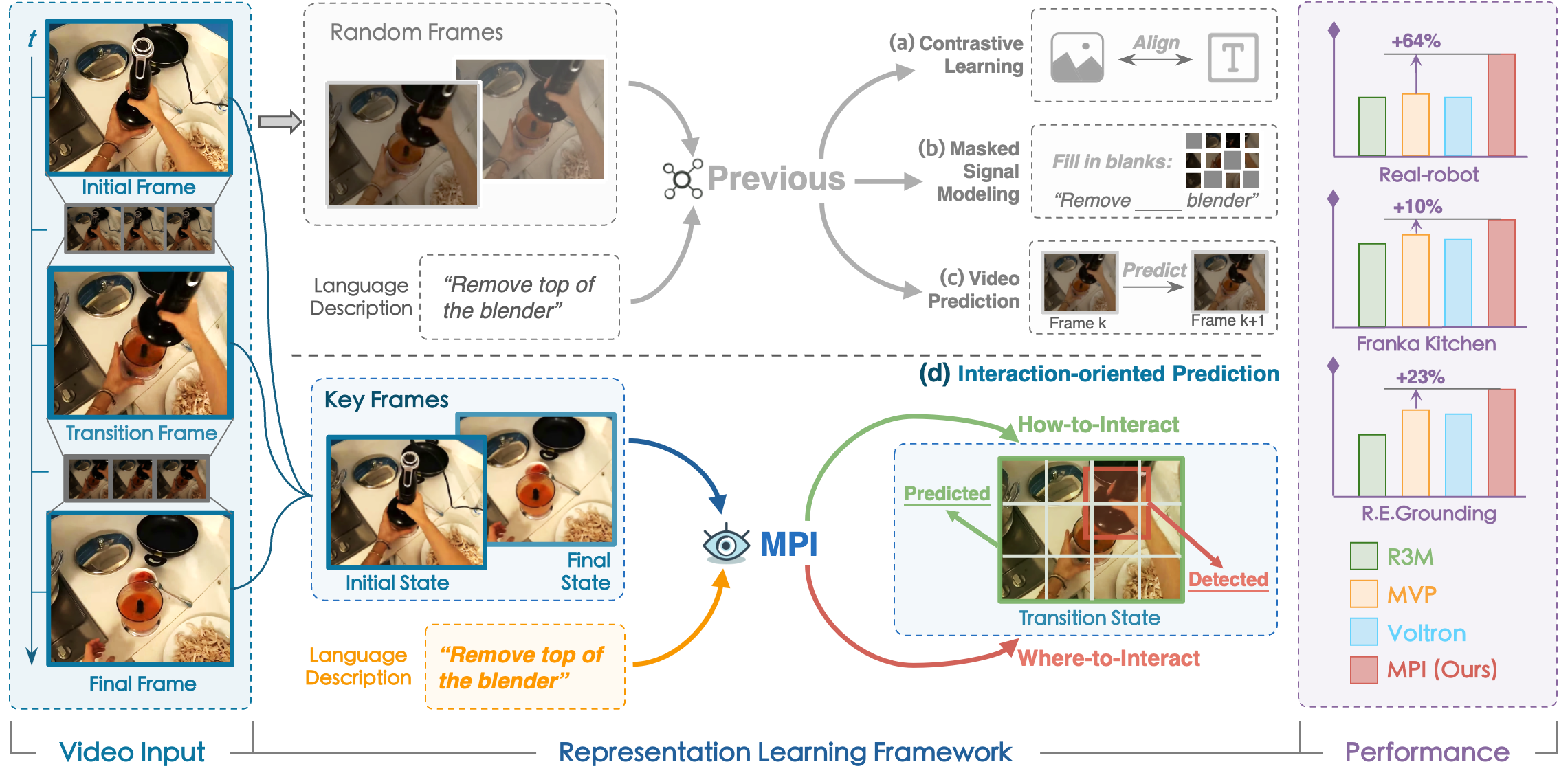

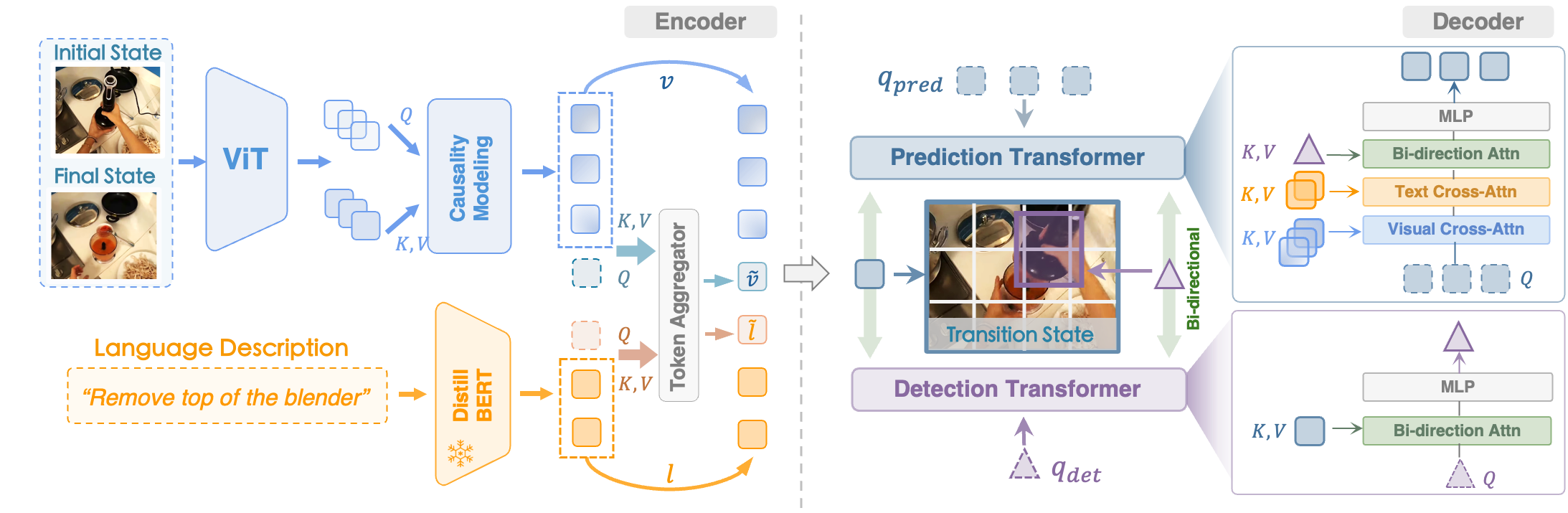

MPI is an interaction-oriented representation learning method for robot manipulation:

- Instruct the model to predict transition frames and detect manipulated objects given keyframes.

- Foster better comprehension of “how-to-interact” and “where-to-interact”.

- Acquire more informative representations during pre-training and achieve clear improvements across downstream tasks.

Real-world robot experiments in a complex kitchen environment.

| Take the spatula off the shelf (2x speed) | Lift up the pot lid (2x speed) |
|---|---|
| take_the_spatula_2.mp4 | lift_up_the_pot_lid_2.mp4 |
| Close the drawer (2x speed) | Put pot into sink (2x speed) |
| close_drawer_2.mp4 | mov_pot_into_sink_2.mp4 |

- [2024/05/31] We released the implementation of pre-training and of evaluation on the Referring Expression Grounding task.

- [2024/06/04] We released our paper on arXiv.

- [2024/06/16] We released the model weights.

- [2024/07/05] We released the evaluation code for the Franka Kitchen environment.

Step 1. Clone the repository and install the MPI dependencies:

git clone https://github.com/OpenDriveLab/MPI

cd MPI

pip install -e .

Step 2. Prepare the language model. You may download DistilBERT from HuggingFace.

To directly utilize MPI for extracting representations, please download our pre-trained weights:

| Model | Checkpoint | Params. | Config |
|---|---|---|---|
| MPI-Small | GoogleDrive | 22M | GoogleDrive |
| MPI-Base | GoogleDrive | 86M | GoogleDrive |

Your directory tree should look like this:

checkpoints
├── mpi-small
│   ├── MPI-small-state_dict.pt
│   └── MPI-small.json
└── mpi-base
    ├── MPI-base-state_dict.pt
    └── MPI-base.json
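Before loading a model, it can help to confirm that the files landed where the tree above expects them. The following is a minimal sketch using only the Python standard library. The helper name `missing_checkpoint_files` and the default root `checkpoints` are illustrative assumptions, not part of the MPI codebase.

```python
from pathlib import Path

# Expected files per model directory, copied from the directory tree above.
EXPECTED_FILES = {
    "mpi-small": ("MPI-small-state_dict.pt", "MPI-small.json"),
    "mpi-base": ("MPI-base-state_dict.pt", "MPI-base.json"),
}


def missing_checkpoint_files(root="checkpoints"):
    """Return the expected checkpoint files that are absent under `root`.

    Hypothetical helper for illustration; it only checks that files exist,
    not that their contents are valid.
    """
    root = Path(root)
    missing = []
    for model_dir, filenames in EXPECTED_FILES.items():
        for filename in filenames:
            path = root / model_dir / filename
            if not path.is_file():
                missing.append(path)
    return missing


if __name__ == "__main__":
    missing = missing_checkpoint_files()
    if missing:
        print("Missing files:")
        for path in missing:
            print(f"  {path}")
    else:
        print("Checkpoint layout looks complete.")
```

If you downloaded only one of the two models, the entries reported for the other model can be ignored.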

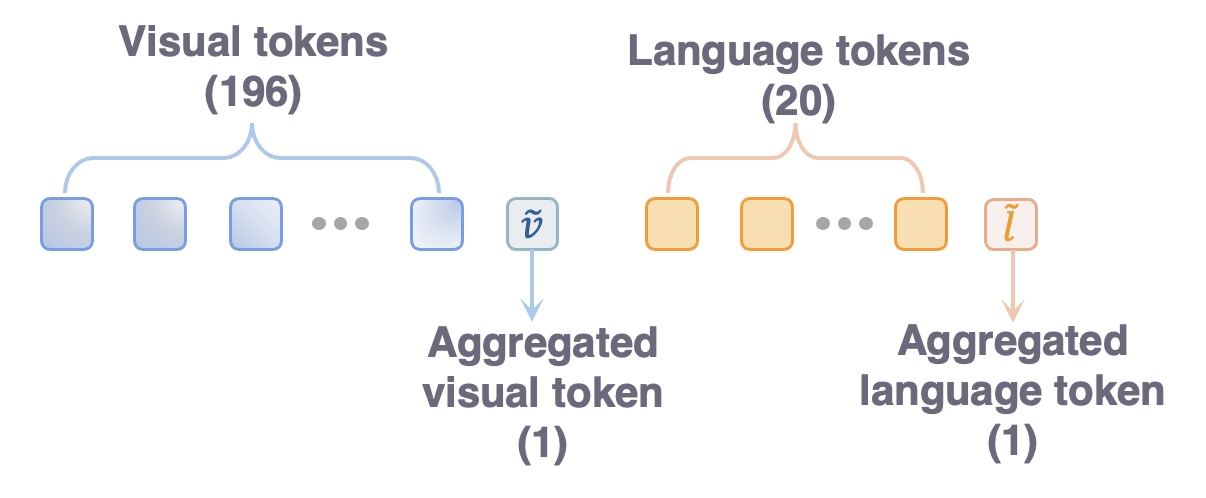

We provide an example script, get_representation.py, that shows how to obtain the pre-trained MPI features. By default, the MPI encoder takes two images as input. In downstream tasks, we simply duplicate the current observation to ensure compatibility.
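The duplication step can be sketched in PyTorch as shown below. This is an illustration only, not the code in get_representation.py: the tensor layout (batch, frames, channels, height, width), the 224x224 resolution, and the helper name `duplicate_observation` are assumptions, so check the script for the layout the encoder actually expects.

```python
import torch


def duplicate_observation(obs: torch.Tensor) -> torch.Tensor:
    """Stack one observation with itself to form a two-frame input.

    Assumes `obs` has shape (batch, channels, height, width) and returns a
    tensor of shape (batch, 2, channels, height, width). The frame axis
    position is an assumption made for this sketch.
    """
    return torch.stack((obs, obs), dim=1)


# Random tensor standing in for a preprocessed camera image.
obs = torch.rand(1, 3, 224, 224)
frames = duplicate_observation(obs)
print(frames.shape)  # torch.Size([1, 2, 3, 224, 224])
```

Both frames are identical, so the encoder receives the two-image input it requires even though only the current observation is available.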

The following diagram presents the composition and arrangement of the extracted tokens:

Download the Ego4D Hand-and-Object dataset:

# Download the CLI

pip install ego4d

# Select Subset Of Hand-and-Object

python -m ego4d.cli.cli --output_directory=<path-to-save-dir> --datasets clips annotations --metadata --version v2 --benchmarks FHO
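If you prefer to trigger the download from a Python script, the same command can be assembled as an argument list. This is a minimal sketch that reuses only the flags shown above. The function names `build_ego4d_command` and `download_ego4d` are hypothetical helpers, and the sketch assumes the `ego4d` package is installed in the current interpreter.

```python
import subprocess
import sys


def build_ego4d_command(output_dir):
    """Build the argument list for the Ego4D download command shown above."""
    return [
        sys.executable, "-m", "ego4d.cli.cli",
        f"--output_directory={output_dir}",
        "--datasets", "clips", "annotations",
        "--metadata",
        "--version", "v2",
        "--benchmarks", "FHO",
    ]


def download_ego4d(output_dir):
    """Run the download; raises CalledProcessError if the CLI exits with an error."""
    subprocess.run(build_ego4d_command(output_dir), check=True)
```

Passing the arguments as a list avoids shell quoting problems when the save directory contains spaces.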

Your directory tree should look like this:

$<path-to-save-dir>
├── ego4d.json
└── v2
    ├── annotations
    └── clips

Preprocess the dataset for pre-training MPI:

python prepare_dataset.py --root_path <path-to-save-dir>/v2/

Then launch pre-training on a single node with 8 processes:

torchrun --standalone --nnodes 1 --nproc-per-node 8 pretrain.py

For the Referring Expression Grounding evaluation:

Step 1. Prepare the OCID-Ref dataset following this repo, then place it in:

./mpi_evaluation/referring_grounding/data/langref

Step 2. Launch the evaluation with:

python mpi_evaluation/referring_grounding/evaluate_refer.py test_only=False iou_threshold=0.5 lr=1e-3 \
    model=\"mpi-small\" \
    save_path=\"MPI-Small-IOU0.5\" \
    eval_checkpoint_path=\"path_to_your/MPI-small-state_dict.pt\" \
    language_model_path=\"path_to_your/distilbert-base-uncased\"

Or you can simply use:

bash mpi_evaluation/referring_grounding/eval_refer.sh

Follow the guidebook to set up the Franka Kitchen environment and download the expert demonstrations.

Evaluate visuomotor control in the Franka Kitchen environment with 25 expert demonstrations:

CUDA_VISIBLE_DEVICES=0 PYTHONPATH=mpi_evaluation/franka_kitchen/MPIEval/core python mpi_evaluation/franka_kitchen/MPIEval/core/hydra_launcher.py hydra/launcher=local hydra/output=local env="kitchen_knob1_on-v3" camera="left_cap2" pixel_based=true embedding=ViT-Small num_demos=25 env_kwargs.load_path=mpi-small bc_kwargs.finetune=false job_name=mpi-small seed=125 proprio=9

If you find the project helpful for your research, please consider citing our paper:

@article{zeng2024learning,
  title={Learning Manipulation by Predicting Interaction},
  author={Zeng, Jia and Bu, Qingwen and Wang, Bangjun and Xia, Wenke and Chen, Li and Dong, Hao and Song, Haoming and Wang, Dong and Hu, Di and Luo, Ping and others},
  journal={arXiv preprint arXiv:2406.00439},
  year={2024}
}

The code of this work is built upon Voltron and R3M. Thanks for their open-source work!